The AI Model Explosion: How March 2026 Kicked Off the Agentic Era

If you blinked during the first two weeks of March 2026, you missed a lot. Twelve-plus major AI model releases in seven days. A benchmark milestone that put a machine above human expert level on economically valuable tasks — for the first time. The Model Context Protocol crossing 97 million installs. And underneath all of it, a fundamental shift in what AI is actually for.

We’re not in the chatbot era anymore.


The Week That Changed Everything: March 1–8

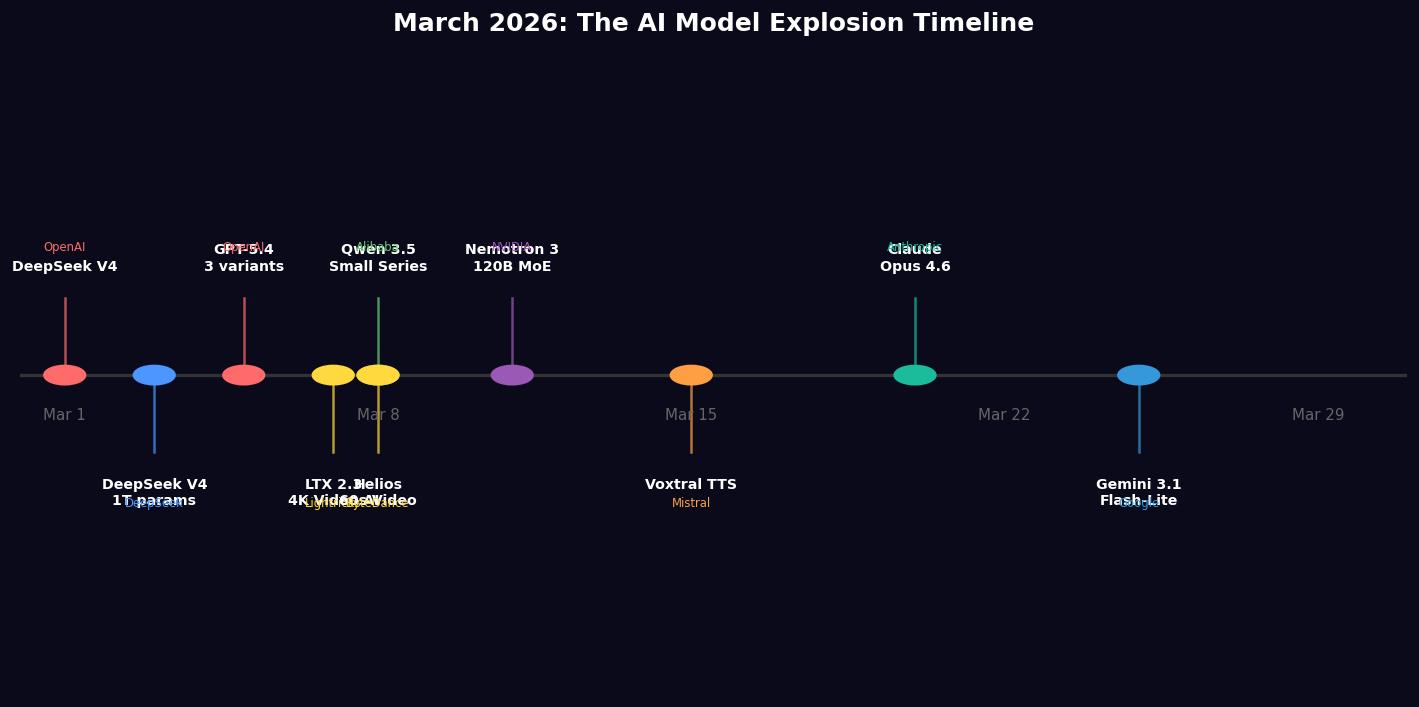

On March 1, 2026, DeepSeek quietly dropped V4. By March 8, the following had also landed: GPT-5.4 in three variants, LTX 2.3, the Qwen 3.5 Small Series from Alibaba, ByteDance’s Helios, and more. In total, observers counted at least 12 major model announcements from organizations including OpenAI, DeepSeek, Alibaba, Lightricks, Tencent, Meta, ByteDance, and several universities — all within a single calendar week.

That’s not a coincidence. It’s a signal about the state of the field. The development cycles have compressed to the point where frontier organizations are racing on overlapping timelines, and the releases are landing in clusters.

Let’s go through the standouts.

GPT-5.4: Human Expert Level on the Benchmarks

OpenAI’s GPT-5.4 (released March 5) arrived in three variants (Standard, Thinking, and Pro), and the headline was the context window: up to 1.05 million tokens across the range. That’s 50 to 100 times longer than prior generations managed, and it has real consequences for how these models can be deployed. Long documents, multi-session memory, and extended codebases are suddenly tractable in a single call.
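As a rough illustration of what a 1.05 million-token window buys, the sketch below checks whether a set of documents would fit in a single call. The 4-characters-per-token ratio and the 50,000-token output reserve are assumptions for illustration, not figures from OpenAI; a real check would count tokens with the model’s own tokenizer.

```python
import math

CONTEXT_WINDOW_TOKENS = 1_050_000  # figure quoted in this article
CHARS_PER_TOKEN = 4                # assumption: rough heuristic for English text


def estimate_tokens(text):
    """Crude token estimate based on character count."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def fits_in_context(documents, reserved_for_output=50_000):
    """Return (estimated_tokens, fits) for a list of document strings."""
    used = sum(estimate_tokens(doc) for doc in documents)
    budget = CONTEXT_WINDOW_TOKENS - reserved_for_output
    return used, used <= budget


# Two stand-in "documents" of 2.0 and 1.5 million characters
docs = ["x" * 2_000_000, "y" * 1_500_000]
used, ok = fits_in_context(docs)
print(f"estimated tokens: {used:,}, fits in one call: {ok}")
```

Under these assumptions, about 3.5 million characters of text comes to roughly 875,000 tokens, which fits with room to spare.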

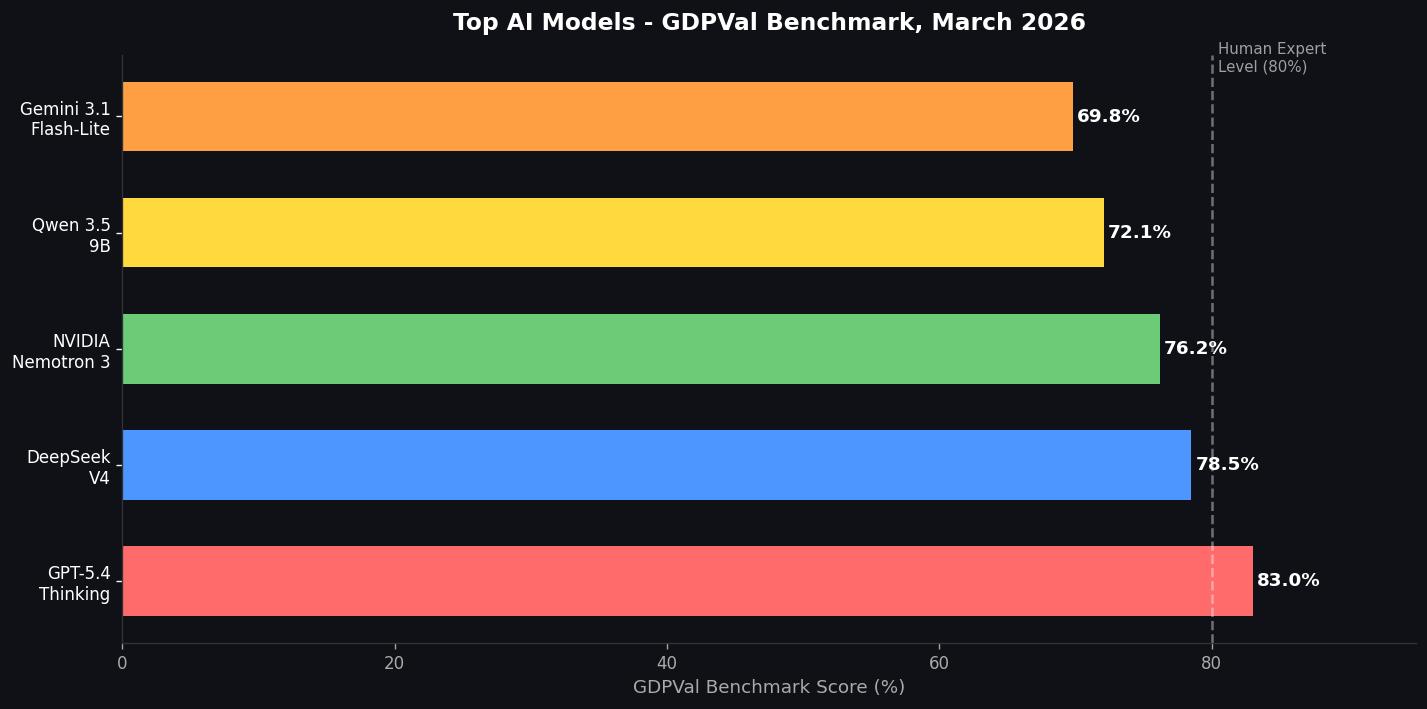

But the number that got the most attention was the benchmark score. GPT-5.4 Thinking scored 83.0% on the GDPVal benchmark, which attempts to measure performance on economically valuable tasks, the kind of professional work that actually matters to businesses. That puts it at or above the human expert baseline, a first for that benchmark.

That framing matters. “Human expert level” on a task-oriented benchmark is a very different claim than “passed the bar exam.” It’s a direct statement about economic utility, and the industry took notice.

DeepSeek V4: A Trillion Parameters, 32 Billion Active

DeepSeek V4 is built around an architecture that’s becoming increasingly common at the frontier: massive total parameter count, tiny active footprint. The model packs 1 trillion parameters but activates only 32 billion of them per token during inference, about 3.2% of the total. The efficiency gains this unlocks, in both cost and latency, are what make trillion-parameter models practical rather than just impressive.

The Mixture-of-Experts approach (where only a subset of the network activates per forward pass) is becoming the dominant paradigm for scaling, and DeepSeek V4 is one of the most aggressive deployments of it yet.
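To make the routing idea concrete, here is a minimal sketch of top-k expert selection in plain Python. It is not DeepSeek’s implementation: the eight toy experts, the random router scores, and k=2 are all assumptions chosen for illustration, and real systems route tensors through learned layers rather than scalars through lambdas.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_scores, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts.

    Weights are renormalized so they sum to 1 over the selected experts.
    """
    probs = softmax(router_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]


def moe_forward(x, experts, router_scores, k=2):
    """Run only the selected experts and mix their outputs by router weight."""
    selected = route_top_k(router_scores, k)
    output = sum(weight * experts[i](x) for i, weight in selected)
    return output, [i for i, _ in selected]


# Eight hypothetical experts; each one just scales its input
experts = [lambda x, s=s: s * x for s in range(1, 9)]
random.seed(0)
scores = [random.gauss(0, 1) for _ in experts]

y, used = moe_forward(1.5, experts, scores, k=2)
print(f"experts used: {used} of {len(experts)}")
```

Only two of the eight experts run for this input. That is the same trade the large models make at scale: total capacity grows with the number of experts, while per-token compute tracks the handful that are selected.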

NVIDIA Nemotron 3 Super: Built for Agents

NVIDIA’s entry into the March release wave wasn’t a general-purpose chat model — it was designed from the ground up for multi-agent applications. Nemotron 3 Super is a 120-billion-parameter Mixture-of-Experts model with only 12 billion active parameters per forward pass, and it shipped alongside NVIDIA’s broader Agent Toolkit and the OpenShell open-source runtime.

The agent-first framing is deliberate. NVIDIA is positioning this as infrastructure for the agentic layer, not a competitor to GPT or Claude in conversation. The distinction matters more and more as the industry’s center of gravity shifts toward deployment patterns where models are acting as automated workers rather than responding to humans in real time.


Alibaba Qwen 3.5 Small Series: On-Device Frontier Performance

Qwen 3.5’s 9B variant does something that would have been implausible 18 months ago: it matches or surpasses models 13 times its size on standard benchmarks, and it runs entirely on-device — on smartphones and laptops, without a cloud call. This is partly an architecture story and partly a training data story, but the practical implication is significant.

The era of needing a massive inference cluster to run a capable model is ending at the small end of the scale. When a 9B model running locally can compete with a 120B model from two years ago, the deployment calculus for a huge number of applications changes.
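A quick back-of-the-envelope calculation shows why a 9B model is plausible on a laptop or phone. The sketch below estimates memory for the weights alone at a few common precisions. The precisions are assumptions for illustration (the article does not say how Qwen 3.5’s 9B variant is quantized), and activations, KV cache, and runtime overhead are ignored.

```python
def weight_memory_gb(num_params, bits_per_param):
    """Approximate memory for model weights alone, in gigabytes (10^9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


PARAMS_9B = 9e9

# Hypothetical precisions; not a statement about how Qwen 3.5 ships
for label, bits in [("fp16", 16), ("int8", 8), ("int4", 4)]:
    print(f"{label}: {weight_memory_gb(PARAMS_9B, bits):.1f} GB")
```

That works out to about 18 GB at 16-bit, 9 GB at 8-bit, and 4.5 GB at 4-bit, which is why lower-precision weights are what bring a model of this size within reach of consumer hardware.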

LTX 2.3 and Helios: Video Generation Goes Real-Time

Two March releases pushed video generation into genuinely different territory.

Lightricks LTX 2.3 is a 22-billion-parameter open-source model that generates 4K video at 50 FPS with synchronized audio in a single pass. The synchronized audio component is new — prior video models treated audio as a separate problem. LTX 2.3 treats it as a unified generation task.

ByteDance and Peking University’s Helios takes a different approach: it generates full 60-second videos at real-time speed on a single GPU. The compute efficiency story there is dramatic. Running video generation at real-time speed on commodity hardware opens up production workflows that were previously only available to teams with serious infrastructure.

The Real Story: 97 Million MCP Installs and the Agentic Shift

The model releases are the easy-to-report part of what happened in March 2026. The part that is harder to quantify, and more important, is the infrastructure shift underneath them.

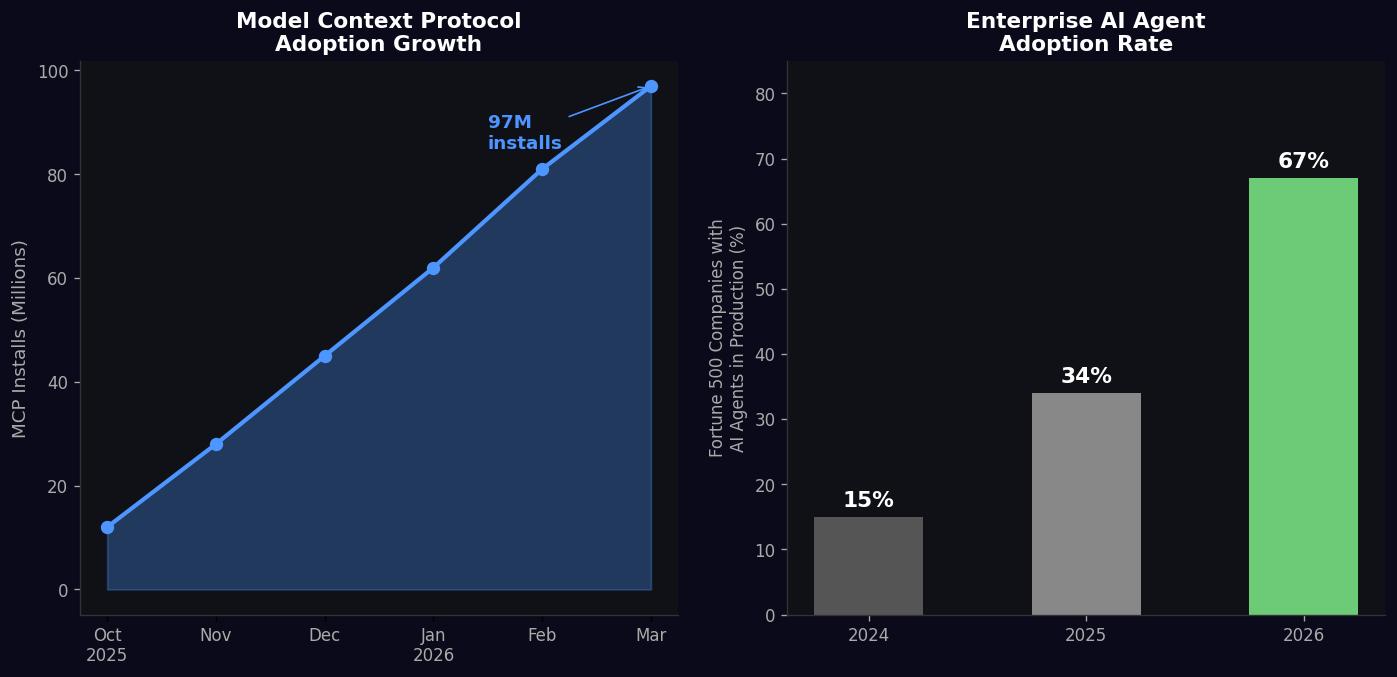

The Model Context Protocol (MCP), the open standard for connecting AI models to external tools, data sources, and APIs, had crossed 97 million installs as of March 25. When MCP launched in late 2024, it was an interesting developer experiment. At 97 million installs across frameworks, IDEs, enterprise deployments, and production applications, it’s foundational infrastructure.

Why does this matter for the model release story? Because it’s the connective tissue. A language model on its own answers questions and generates text. A language model connected via MCP to your calendar, your codebase, your databases, your communication tools, and your business systems is something different: it’s an autonomous worker that can act across your stack.
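For a sense of what “connected via MCP” looks like on the wire, here is a minimal sketch of a tool invocation. MCP messages are JSON-RPC 2.0, and tools are invoked with the `tools/call` method. The tool name `calendar_list_events`, its arguments, and the sample response are hypothetical, and the transport (stdio or HTTP) is left out entirely.

```python
import json


def build_tool_call(request_id, tool_name, arguments):
    """Build a JSON-RPC 2.0 request that asks an MCP server to run a tool."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


def extract_text(response):
    """Collect the text items from a tools/call response."""
    if "error" in response:
        raise RuntimeError(response["error"].get("message", "unknown error"))
    result = response["result"]
    if result.get("isError"):
        raise RuntimeError("tool reported an error")
    return [
        item["text"]
        for item in result.get("content", [])
        if item.get("type") == "text"
    ]


# Hypothetical tool name and arguments, for illustration only
request = build_tool_call(1, "calendar_list_events", {"date": "2026-03-25"})
print(json.dumps(request, indent=2))

# Hand-written stand-in for what a server might send back
sample_response = {
    "jsonrpc": "2.0",
    "id": 1,
    "result": {
        "content": [{"type": "text", "text": "2 events found"}],
        "isError": False,
    },
}
print(extract_text(sample_response))
```

The model never touches the calendar directly. It emits a structured request, the MCP server executes it, and the result comes back as content the model can reason over.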

The enterprise adoption numbers back this up. As of March 2026, 67% of Fortune 500 companies have at least one AI agent running in production, up from 34% in 2025. That’s not experimentation anymore; that’s operational dependency.

Microsoft’s release of Copilot Cowork (delegated multi-step task execution) and Agent 365 (governance infrastructure for agents across organizations) in March is a signal from the world’s largest enterprise software vendor: the focus has shifted from “AI that helps you write emails” to “AI that executes workflows on your behalf.” Those are fundamentally different products with fundamentally different risk profiles, which is why the governance layer is getting built out in parallel.

What the Acceleration Actually Means

Twelve model releases in a week is startling, but it obscures what’s actually interesting about March 2026. The more significant thing is the convergence of capability, infrastructure, and enterprise adoption all hitting inflection points simultaneously.

A few things to watch heading into Q2:

The context window arms race is effectively over at the frontier. When GPT-5.4 ships with a context window of roughly 1 million tokens and that’s expected rather than surprising, the marginal value of longer context has diminished. The next differentiator is what the model does with that context: reasoning quality, instruction-following, and reliability under complex multi-step tasks.

On-device models are a genuine strategic bet, not marketing positioning. Qwen 3.5’s 9B running locally and matching much larger cloud-hosted models changes the privacy calculus for enterprises. A model that never sends data off-device solves a category of compliance problems that no SLA guarantee from a cloud provider can fully address.

The compute efficiency story is the real benchmark. DeepSeek V4’s 1 trillion total parameters with 32 billion active, NVIDIA Nemotron 3 Super’s 120 billion with 12 billion active, and Helios running at real-time speed on a single GPU all point the same way: the real competition in 2026 isn’t who has the biggest model, it’s who can deliver the best performance per dollar of inference compute. That’s the metric that determines which organizations build sustainable businesses on top of AI.

MCP at 97 million installs means agentic AI is infrastructure, not a feature. When the protocol for connecting AI to external systems is that widely deployed, you’re past the “interesting experiment” phase. The next 12 months will be defined by what gets built on top of that installed base.
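The compute efficiency point above comes down to simple ratios, sketched below using the parameter counts quoted in this article. The per-token compute estimate uses the common rule of thumb of roughly 2 FLOPs per active parameter per token; that is an approximation assumed here, not a vendor figure, and it ignores attention, memory bandwidth, and routing overhead.

```python
# (total parameters, active parameters per token), as quoted in this article
MODELS = {
    "DeepSeek V4": (1e12, 32e9),
    "Nemotron 3 Super": (120e9, 12e9),
}


def active_fraction(total_params, active_params):
    """Share of the model's parameters used for each token."""
    return active_params / total_params


def approx_forward_flops_per_token(active_params):
    """Rule-of-thumb assumption: about 2 FLOPs per active parameter per token."""
    return 2 * active_params


for name, (total, active) in MODELS.items():
    frac = active_fraction(total, active)
    gflops = approx_forward_flops_per_token(active) / 1e9
    print(f"{name}: {frac:.1%} active, ~{gflops:.0f} GFLOPs per token")
```

By this estimate DeepSeek V4 touches about 3.2% of its weights per token and Nemotron 3 Super about 10%, so per-token compute is set by the active count rather than the headline parameter count.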

March 2026 won’t be remembered as the month that AI “arrived” — that’s a retroactive framing that flattens too much. It’ll be remembered as the month it became undeniably clear that the conversational assistant era was ending and the agentic era was beginning. The model releases are evidence. The 97 million MCP installs are evidence. The 67% Fortune 500 production deployment figure is evidence.

The question now isn’t whether AI agents will be embedded in enterprise workflows. They already are. The question is how fast the organizational adaptation catches up to the technical capability.

Based on March’s pace, probably faster than most people expect.

Sources: Build Fast With AI — 12+ Models in March 2026 · Digital Applied — March 2026 AI Roundup · Crescendo AI — Latest AI News · Moltbook AI — AI Agents March 2026
