OpenClaw's 'ChatGPT Moment' and the AI Model War Nobody Saw Coming

Something happened in early March 2026 that reminded a lot of people of November 2022. Back then, OpenAI released ChatGPT and the internet briefly lost its mind. A simple chat interface crossed a million users in five days. Everyone suddenly had opinions about large language models.

This time the trigger was OpenClaw, and OpenClaw isn’t a chatbot. It’s something arguably more significant: an open-source, autonomous AI agent that runs locally on your machine, connects to your messaging apps, and can actually do things on your behalf. In 60 days it accumulated 318,000 GitHub stars, and Jensen Huang, Nvidia’s CEO, called it “probably the most important software release, you know, probably ever.”

That’s a big claim. But the numbers back up the hype in ways that are hard to dismiss.

What Is OpenClaw, Actually?

OpenClaw (formerly called Clawdbot, then Moltbot) was first published in November 2025 by Austrian developer Peter Steinberger. The project’s mascot is a lobster — hence the name — and the tagline is “Any OS. Any Platform. The lobster way. 🦞”

Underneath the whimsy, the architecture is genuinely interesting. OpenClaw connects large language models to your actual software — your files, your browser, your APIs, your messaging apps. It uses a “skills” system where discrete capability bundles are stored as directories, each containing a SKILL.md file that tells the model what the skill does and how to use it. Install a new skill, and the agent gains new capabilities. It’s modular in a way that existing closed-source agent platforms aren’t.
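
The skills layout described above lends itself to a simple discovery step. What follows is a minimal Python sketch, not OpenClaw’s actual loader: it assumes a hypothetical root folder whose subdirectories each hold a SKILL.md file, and the names `discover_skills` and `render_skill_index` are invented for illustration.

```python
from pathlib import Path
import tempfile


def discover_skills(root: Path) -> dict[str, str]:
    """Return {skill directory name: SKILL.md text} for every skill under root.

    Hypothetical helper: the real OpenClaw loader may work differently.
    """
    skills: dict[str, str] = {}
    for entry in sorted(root.iterdir()):
        manifest = entry / "SKILL.md"
        # Only directories that actually contain a SKILL.md count as skills.
        if entry.is_dir() and manifest.is_file():
            skills[entry.name] = manifest.read_text(encoding="utf-8")
    return skills


def render_skill_index(skills: dict[str, str]) -> str:
    """Build a short text index: skill name plus the first line of its SKILL.md."""
    lines = []
    for name, text in skills.items():
        stripped = text.strip()
        summary = stripped.splitlines()[0] if stripped else "(empty SKILL.md)"
        lines.append(f"- {name}: {summary}")
    return "\n".join(lines)


if __name__ == "__main__":
    # Demo with throwaway directories; the skill names are made up.
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        (root / "calendar").mkdir()
        (root / "calendar" / "SKILL.md").write_text(
            "Manage calendar events.\n", encoding="utf-8"
        )
        (root / "notes").mkdir()  # no SKILL.md, so it is ignored
        print(render_skill_index(discover_skills(root)))
```

The point of the sketch is the design choice the article highlights: adding a capability means adding a directory, and an index like this one is the kind of text a model could be shown so it knows which skills exist.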

The multi-platform integration is remarkable in its breadth: WhatsApp, Telegram, Slack, Discord, Signal, iMessage, Microsoft Teams, Matrix, and a dozen more. Your OpenClaw agent lives wherever you communicate. You don’t go to it — it comes to you, in whatever app you already use.

The practical results speak for themselves. The project recorded 2 million site visits in a single week. 57,000 forks. 1,100 contributors. 129 startups bootstrapped using OpenClaw infrastructure, generating a combined $283,000 in monthly revenue within 30 days of launch. The developer ecosystem didn’t just adopt it — it built on it.

Shortly after the viral explosion, Steinberger announced he would join OpenAI to focus on next-generation agents, while OpenClaw continues as an open-source project. Which is either a validation of the technology or a cautionary tale about what happens to indie projects when the big labs come calling. Probably both.

The “ChatGPT Moment” for Agentic AI

Huang’s endorsement at Nvidia GTC came with context. He wasn’t just complimenting a popular repository; he was making a structural argument: that OpenClaw is to agentic AI what the original GPT API was to conversational AI. It unlocks something. It turns a capability that was previously theoretical and complex into something a developer can spin up in an afternoon.

That framing matters because the industry has been talking about “agentic AI” for two years without anyone quite cracking the mass-adoption problem. The bottlenecks were always UX and trust: you can build an agent that browses the web and fills forms, but getting normal people to actually let it do that is hard. OpenClaw’s answer is to route everything through messaging apps people already trust and use. Instead of a new interface paradigm, it’s a familiar one — a chat conversation — with dramatically expanded capabilities underneath.

The Gartner analysts who called OpenClaw’s design “insecure by default” aren’t wrong, technically. Letting an AI agent with access to your email and financial accounts take autonomous actions creates real risk. But they’re probably fighting the tide. The history of consumer technology is the history of convenience winning over security concerns, and OpenClaw is very convenient.

Meanwhile: The Biggest Model Battle in AI History

OpenClaw’s rise isn’t happening in a vacuum. March 2026 has been one of the most consequential months for AI model releases in the technology’s short history. Let’s talk about what’s actually been shipped.

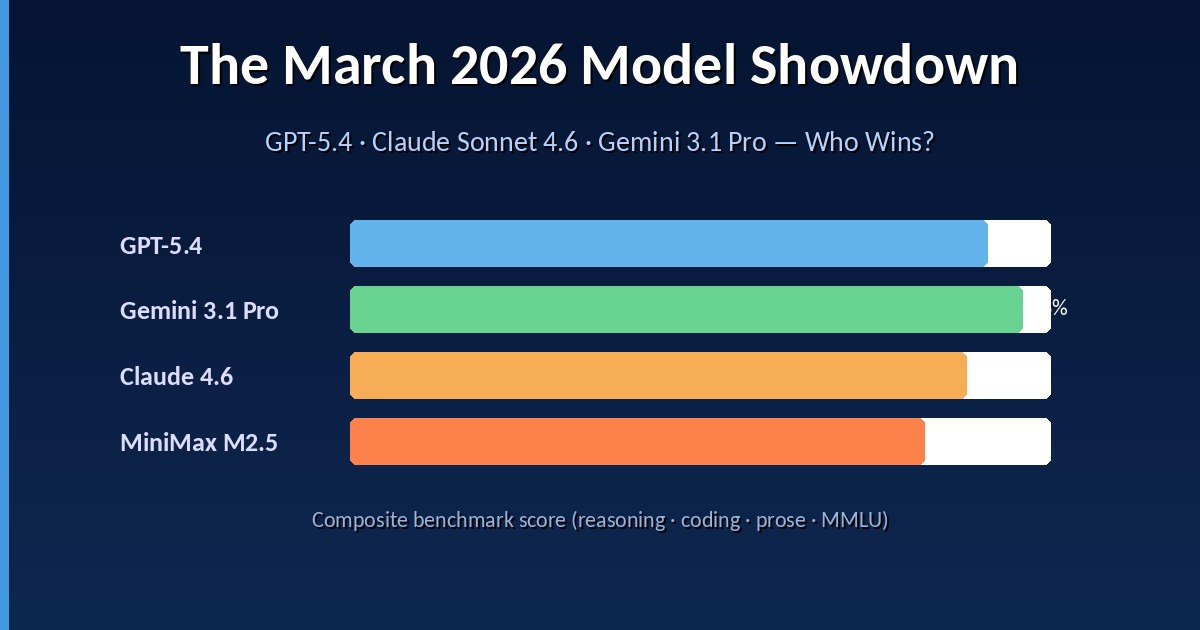

GPT-5.4 (released March 5) is OpenAI’s headline entry. The 1.05 million-token context window is genuinely useful for enterprise document processing. But the more significant feature is native computer use: GPT-5.4 can control a computer on your behalf, browsing the web, filling forms, running applications, and executing workflows. This is a genuine agentic tool, not just a better chatbot. Three variants (Standard, Thinking, Pro) cover different price/capability points, and OpenAI reports 33% fewer factual errors compared to GPT-5.2.

Gemini 3.1 Pro is, by benchmark consensus, the strongest general-purpose model available right now. Its ARC-AGI-2 score of 77.1% is particularly notable — ARC-AGI-2 tests pure logical reasoning that can’t be memorized, so that number reflects something real about the model’s capabilities. Google dominates 13 of 16 major performance benchmarks. Whether that translates to real-world superiority depends heavily on task type, but for reasoning-heavy work, Gemini 3.1 Pro is the current leader.

Claude Sonnet 4.6 from Anthropic has done something economically significant: it demonstrated that a $3/million-token model can match the quality of $15/million-token flagships on most tasks. That’s a watershed moment for enterprise buyers, and it’s a big part of why Anthropic reportedly went from 40% to 73% market share among first-time enterprise customers in just three months. The multi-agent parallelism in Claude Code, where multiple Claude instances coordinate on different parts of a project simultaneously, is also genuinely useful for complex engineering workflows.

MiniMax M2.5 from China has been praised for rivaling Claude Opus 4.6 while costing significantly less. With DeepSeek V4 looming on the horizon, the Chinese open-weight challengers are closing the quality gap faster than most Western analysts expected.

The summary: there has never been a month with this density of genuinely significant model releases, and the models are all extraordinary by any historical standard.

The Uncomfortable Truth: AI Is Becoming a Commodity

Here’s the thread that connects all of the above, and it’s the story that makes AI labs nervous.

The cost to achieve a given benchmark performance level has been falling at a median rate of 5–10× per year for frontier models. DeepSeek’s January 2025 arrival, priced at $0.14 per million input tokens versus GPT-4o’s $3.00 at the time, triggered cascading price cuts across the industry. Today, the 25× gap between the cheapest and most expensive frontier model is the biggest structural fact in enterprise AI procurement.
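
To make the pricing arithmetic concrete, here is a small Python sketch that uses only the figures quoted above ($0.14 versus $3.00 per million input tokens, and a 5–10× annual decline). The function names and the constant-rate assumption are illustrative; real price curves are not this smooth.

```python
def price_ratio(expensive: float, cheap: float) -> float:
    """How many times more the expensive model costs per token."""
    return expensive / cheap


def projected_cost(initial: float, annual_decline: float, years: float) -> float:
    """Cost after `years` if it falls by a constant factor each year.

    Assumes a smooth exponential decline, which is a simplification.
    """
    return initial / (annual_decline ** years)


if __name__ == "__main__":
    # Figures quoted in the article, in dollars per million input tokens.
    gpt4o, deepseek = 3.00, 0.14
    print(f"Price gap: {price_ratio(gpt4o, deepseek):.1f}x")

    # Where a $3.00 price point lands after two years at each end of the range.
    for factor in (5, 10):
        print(f"{factor}x/year -> ${projected_cost(gpt4o, factor, 2):.2f}")
```

With the quoted figures, the January 2025 gap works out to roughly 21×, and a $3.00 price point sustained at a 5–10× annual decline would fall to somewhere between $0.12 and $0.03 within two years. That is the scale of compression behind the commoditization worry.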

The CNBC headline from March 21 captures the anxiety: “OpenClaw’s ChatGPT moment sparks concern that AI models are becoming commodities.” The concern is rational. If DeepSeek’s $0.27/million model achieves 90% of GPT-5 quality, the premium pricing that funds frontier research becomes harder to justify. Labs that built their moats on model capability are discovering that the moat erodes faster than expected.

Tobias Pfuetze’s widely-shared Medium post, “The Model Commoditisation Trap,” makes the logical next step explicit: “Selecting the right foundation model is rapidly becoming the least important strategic decision in enterprise AI.” The moat is everywhere else — the data, the integrations, the fine-tuning, the workflow design, the trust and regulatory compliance infrastructure that enterprises actually need.

This is good news for users and bad news for labs that bet everything on model performance. And it’s why the agentic layer — where OpenClaw operates — is suddenly the most contested ground in AI. When the models are roughly equivalent, the platform that makes them useful in the real world wins.

What This Means for the Rest of 2026

The throughlines emerging from March 2026:

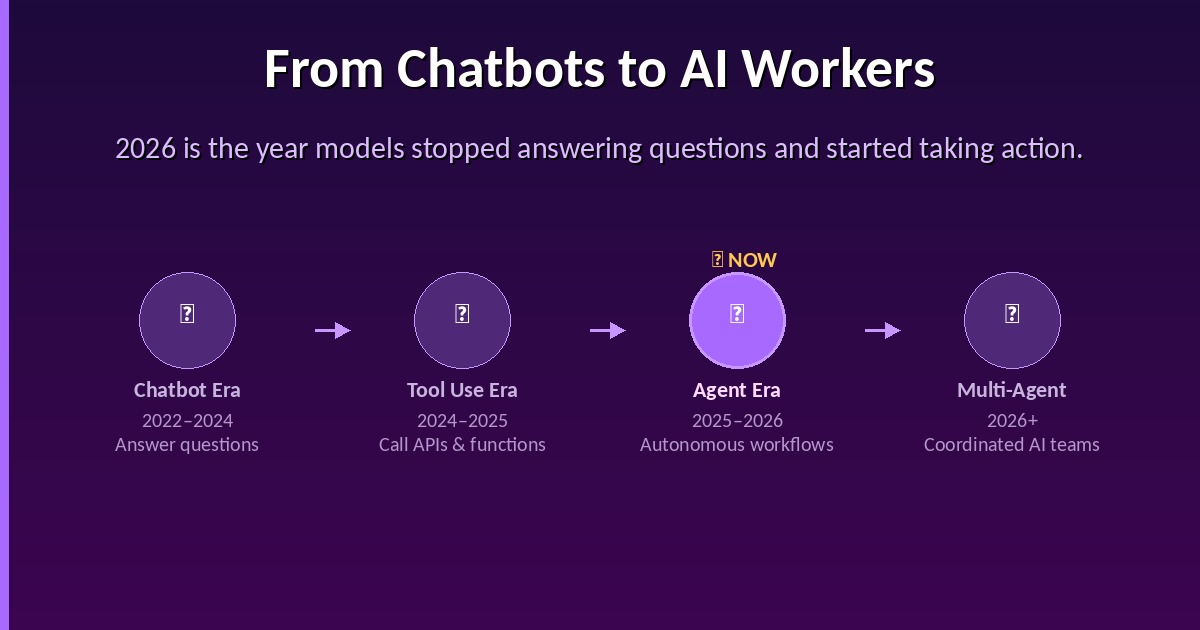

Agentic AI is no longer a research concept. OpenClaw made it real and accessible. GPT-5.4 shipped native computer use. Claude Code’s multi-agent parallelism is in production. The question has shifted from “can AI agents work?” to “how do we deploy them responsibly and at scale?”

The frontier model gap is closing. The practical differences between GPT-5.4, Gemini 3.1 Pro, and Claude Sonnet 4.6 on most everyday tasks are increasingly marginal. Specialization is the new differentiation — Grok 4 and Claude lead on coding, Gemini leads on reasoning, Claude writes the most natural prose. You pick the model for the task, not the brand.

Open source is punching up. OpenClaw’s 318,000 stars didn’t come from enterprise budgets — they came from developers who wanted something powerful, free, and under their own control. The open-source AI ecosystem in 2026 isn’t playing catch-up with closed-source anymore. It’s setting the pace on certain dimensions.

China is a factor that Western analysis keeps underestimating. Fortune’s piece on how OpenClaw went viral in China — “Raise a lobster: How OpenClaw is the latest craze transforming China’s AI sector” — and the MiniMax/Qwen/GLM lineup of competitive models are both evidence of a parallel AI ecosystem that moves fast and increasingly shows up on the same benchmarks as Western frontier labs.

The transition from “AI as chatbot” to “AI as worker” is the story of 2026. OpenClaw is the clearest consumer-facing signal of that transition. The model wars (GPT-5.4 vs. Gemini 3.1 Pro vs. Claude Sonnet 4.6) are the enterprise-facing signal. The commoditization trend is the economic signal.

They’re all pointing in the same direction. The chatbot era is ending. Something more capable, more autonomous, and more consequential is beginning. Whether that’s exciting or alarming probably depends on which side of the automation equation you’re on.

Sources: KDnuggets — OpenClaw Explained · CNBC — OpenClaw’s ChatGPT Moment · CNBC — Jensen Huang on OpenClaw · Medium — March 2026 Model Showdown · BuildFastWithAI — 12+ AI Models in March 2026 · Fortune — OpenClaw in China · The Neuron — AI on March 25
